The AI generator museum of uncanny amusement

-

LivDiv wrote: Do you have a link to where I can try it?

I'm just picking the boy up from school but I'll post later. I've got some Google dev account that I use but I'm sure there's a way.

"Plus he wore shorts like a total cunt" - Bob -

There are some good tools coming but I think getting something presented as a full solution is a good way off.

I posted that Chaos video a few weeks back. The texture map creator they are doing will be fantastic, but that's 2D still.

I've seen something that can fill in a background, e.g. behind a building. That could be good. Things that are chaotic in nature lend themselves better to AI, so it could plot in some trees and some people running around a nice shiny skyscraper that a person has hand built.

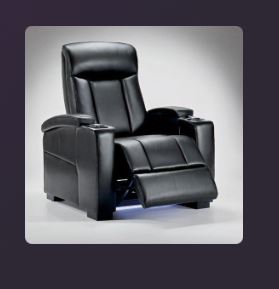

What I've got up there is totally useless, and that's if I want a generic chair. If I want a specific model that will be sold as a product, that has to be accurate. Difficult. For reference, I could make the real product I posted up there in a few hours, a casual afternoon while posting shit on here and watching YouTube. And I'm not an especially great modeller of soft furnishings. -

-

First up 3Daistudio.

This one is image based. You can prompt an image and then use it to create 3D, but that's locked behind a sub. Not an issue, as I have the image I want, so I uploaded the product I posted.

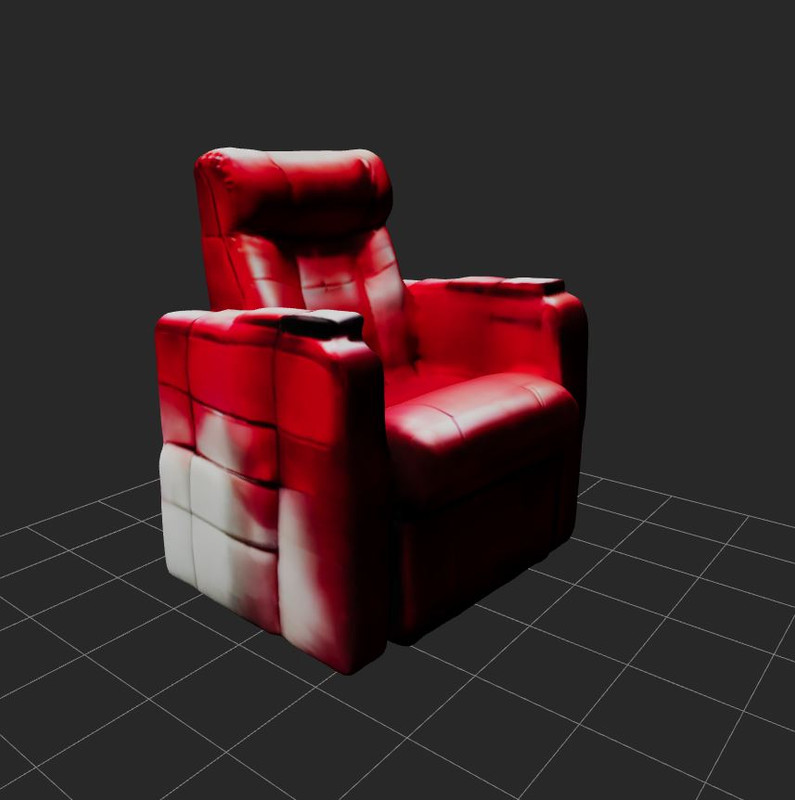

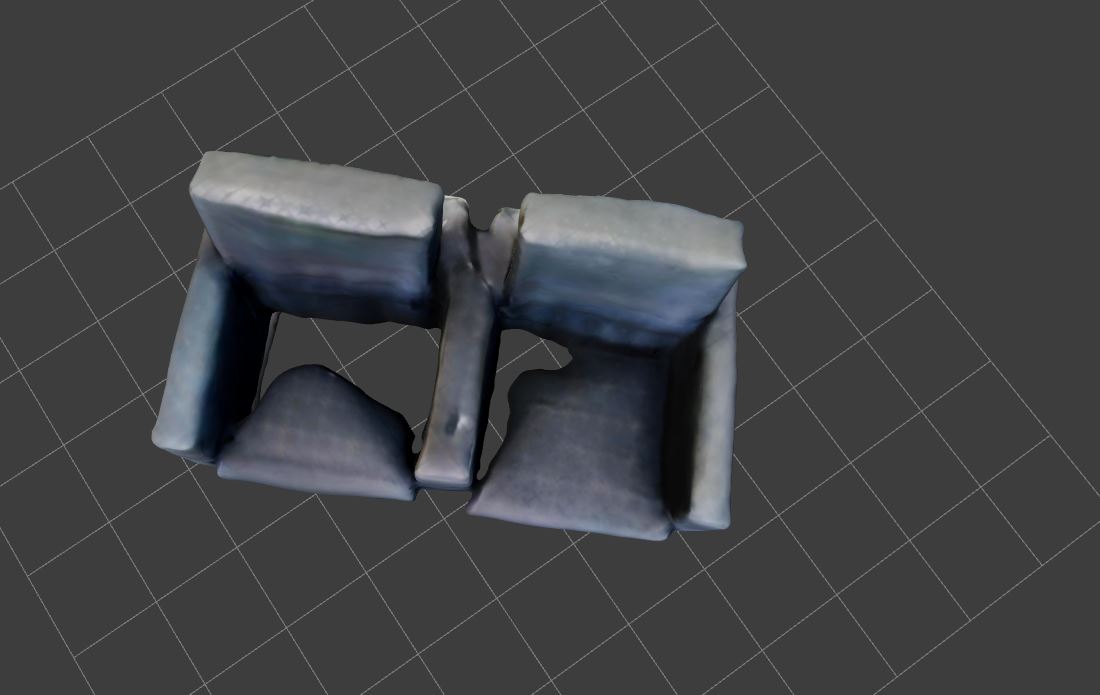

Result at first glance is OK, but still useless: it's made of chewing gum.

But wait...

-

Charmed next.

This one uses a prompt to find images as previews which can be selected.

Looked promising at first, nice preview.

Chewing gum again.

-

The Murder Chair

-

Bed's dead, baby. Bed's dead.

-

This has given me the best AI laughs since the golden age of shit DALL-E images. It’s magnificent. -

You can get a free 2-month trial, Liv.

https://one.google.com/explore-plan/gemini-advanced

For design generation I've just noticed Gemini Apps is unavailable in the UK due to recent changes in the law. There will be ways around it, maybe VPN. Dunno much about designing in AI but it seems the stuff available to you in the UK/EU is now limited or just bad. -

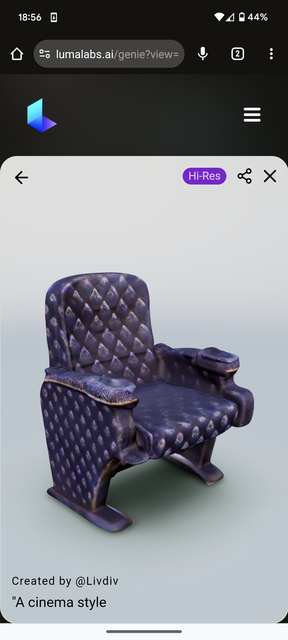

Dug a little deeper.

Seems Luma with Genie is the creme de la creme for text to 3D right now.

I think we can all agree this is a significant improvement to a meatcycle.

This is the refined High Res version that still looks "mushy".

Obviously I could spend longer on prompts to get something closer to what I wanted. The High Res took a while but previews were fast enough.

The major problem with all of these, though, is topology, or the lack of it. They are effectively point cloud models, like 3D scanning.

This makes them needlessly heavy, mushy (heard that in this evening's research and I like it), and impossible to manipulate in any meaningful way beyond scale/rotate/move.

It also means things like these chairs that are made up of components end up sort of blended together rather than having sharp lines, overlaps and contact shadows.

Materials would be tough too. Obviously I might want to ditch that horrific fabric, I couldn't do that easily. If I wanted chrome feet they would look like the inside of a scrunched up crisp packet rather than nice and smooth.

I could take that model and trace over it. It could maybe give me a head start on proportions if I had no dimensions available.

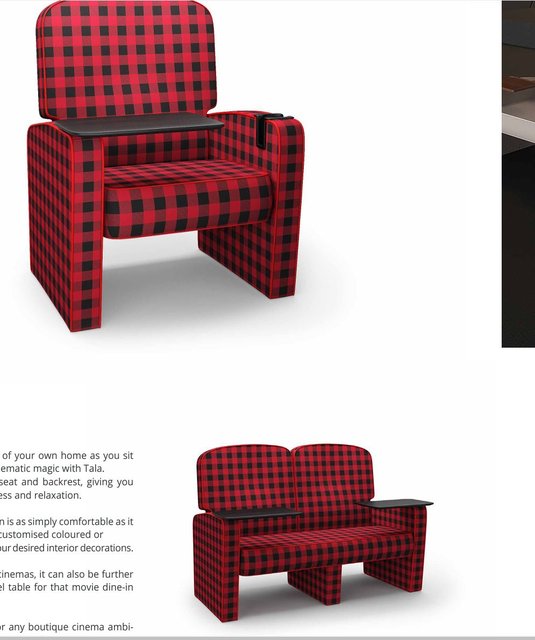

I could also use these as inspiration (although text to image would probably be better), and while that might seem mad for such a gross looking chair I have seen worse. Cinema seating is where design goes to die. -

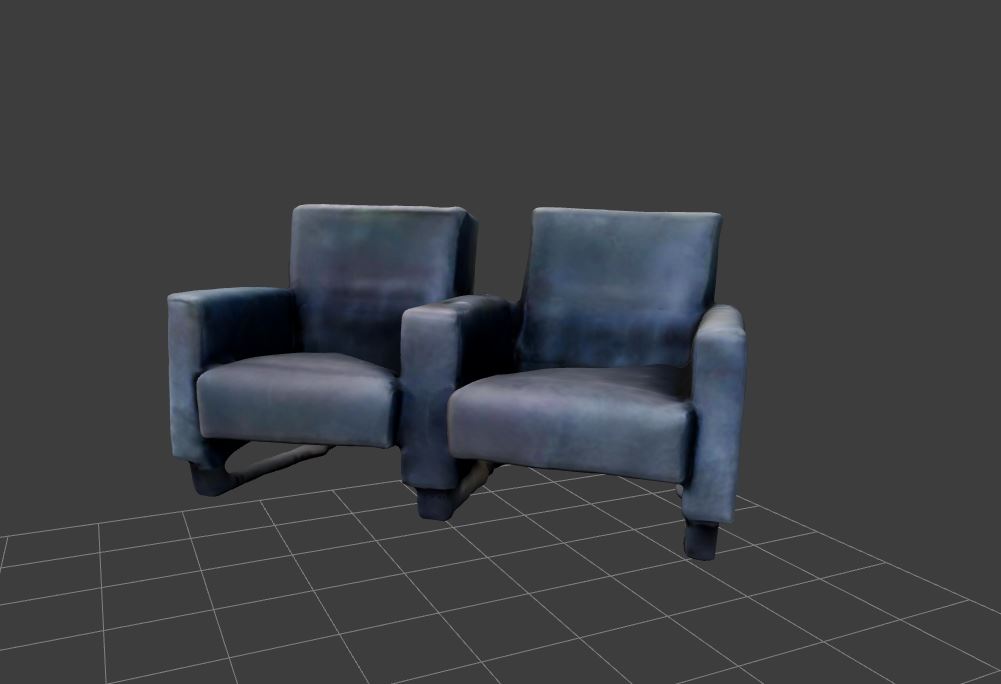

Example.

You know those VIP seats in Odeon cinemas? They are super comfy and don't look horrific. The company that makes those has this in their brochure.

-

AI prompting is a bit of a skill. Not massively, but a bit. I get AI to write code more now since my prompting skills are a little better. It can vastly reduce mistakes.

-

This is the nicest AI ‘art stuff’ I’ve seen yet. Feels like the current state of the tech is particularly suited to surrealism and more people should be using it for that.

https://www.instagram.com/x_new_worlds/ -

As designers get better at prompting and using AI, I think it'll benefit them. It'll make it easier for bad designers to produce better stuff but ultimately good talent will shine through if they skill up.

-

I follow a lot of comic artists and illustrators on the socials and they're going absolutely militant against this stuff. To be expected I suppose.

I don't reckon text prompt to image is the future of pro level illustration and design. Or maybe that, but in combination with rough layout prompts and better editing tools. -

I can imagine artists using AI for sketches and experiments.

Holding the wrong end of the stick since 2009.

-

There'll be more exact control over the prompting with a better focus on tweaking. At the mo it's just one-off prompts and hope for the best but it'll get refined quickly. You can build this AI into existing design software.

-

Short film made with Sora.

https://www.technologyreview.com/2024/03/28/1090266/how-three-filmmakers-created-soras-latest-jaw-dropping-videos/ -

Bills coming due: https://www.theregister.com/2024/04/03/stability_ai_bills/

Rumour is that OpenAI last year was spending over $700,000 a day to run ChatGPT alone (never mind the training etc. for Sora and other R&D). -

Ouch! Sounds like incredibly bad management. As the compute needs accelerate there’ll only be space for the big boys.

-

Getting people to pay for this stuff could be a road block.

Coding eggheads are about to come up against what creatives have faced for years: "do it for exposure", "my son knows Photoshop", and a general perception that stuff that is fun to consume is fun to make, so it is its own reward.

It's probably in the Limewire stage right now: a janky, copyright-infringing minefield. Will need to be iTunesed or Spotified. -

Another problem for Stability is that, I'm guessing, the majority of their users are just getting the open source models. I installed it on my PC without paying a cent. Compare that to Midjourney, who charge for everything now.

-

Yeah I managed to get it done locally, so no need to pay anything.
